A World Without Pink: Algorithmic Bias Explained

Our moms are our biggest superfans.

I’m talking standing ovations at the school play, a closet full of goodies procured at the school fundraiser, and what may as well have been recurring ads in the local newspaper to broadcast our college acceptance letters, first jobs, and first promotions.

I once sang a version of “Whatever Lola Wants” at age 11 to audition for the school play. The faces of cringe from the audition panel still haunt me; my mom thought I showed real Broadway talent.

The point here is not to say that our moms’ love is real and unconditional; it is, and it’s a massive part of our professional success. The point here is to impress upon you the gravity of the problem, the size of the chasm, the grossness of the misunderstanding I realized existed last week when my mom read our last blog post.

After reading the last line, where we implored the reader to study math and statistics as a moral imperative, my mom looked up at me, eyes bleary, and said “wait, I don’t get it, why math? And, what does statistics have to do with it?”

Well, absolutely everything.

Large language models (LLMs) are trained using a combination of statistics and probability: they learn patterns from the vast breadth of published and available written and visual materials, then use those patterns to predict what should come next. This has led to major breakthroughs, paving the way for what may be the greatest innovation in human history.

But if statistics and probability determine the outputs of the machines shaping our future, the inputs that shaped our past—which power these machines—threaten to create even greater inequality unless actively mitigated.

Here’s a really basic, hypothetical example to make that concrete and show just how powerful the effect can be.

Algorithmic Bias Explained to a 5-Year-Old

Let’s say that Janie is training an AI tool; let’s call it Rainbow. She wants to improve her creative output and that of her peers as measured by the number of coloring book pages completed per day. To train the model, Janie uses the coloring book pages of all the students in her school. But for some reason, there are very few pink crayons in school. They have more of a blue and green color palette available at VC Elementary School.

So when Janie and her school friends start to use Rainbow, Rainbow only knows what it knows, so it produces illustrations that are predominantly blue and green, followed by red, orange, and purple and little to no pink.

Rainbow isn’t anti-pink, and neither is Janie, but Rainbow learned from the crayons at VC Elementary School, where they are very low on pink. And so it thinks that this isn’t a very pink world.

One could reason: that’s not such a big deal, especially because VC Elementary School likes more cool hues. But the challenge is that Rainbow doesn’t just keep a hint of pink; over time, pink vanishes. Here’s how:

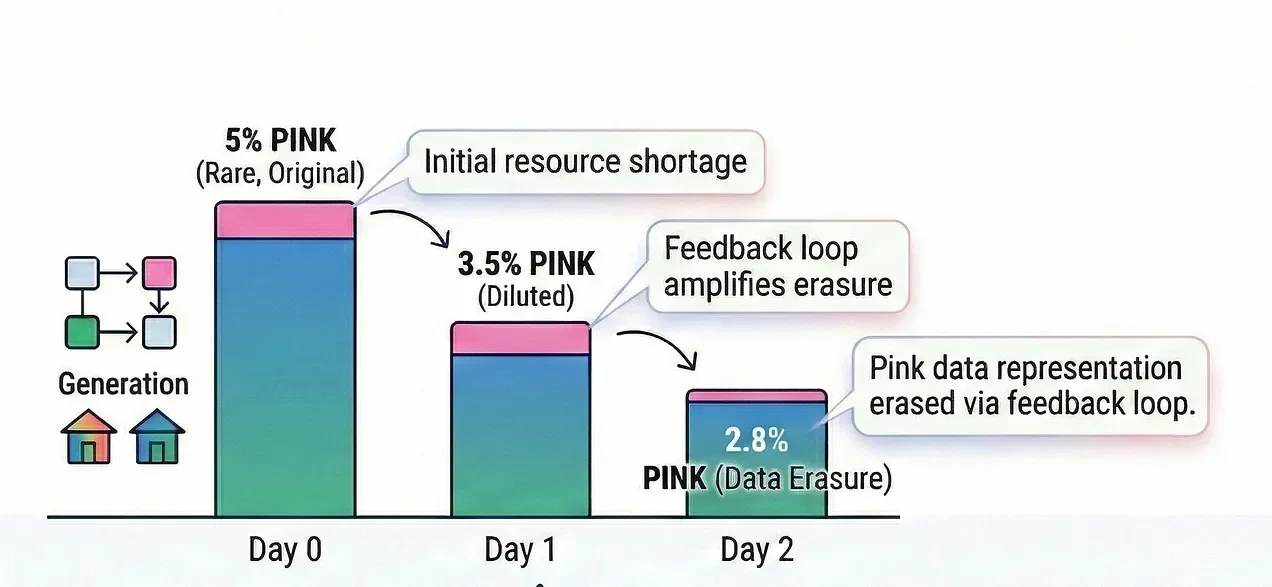

Day 0: 100 pages, 5% pink, 5 pages.

Day 1: 100 new pages, 2% pink, 2 pages. Now the pool is 200 pages with 7 pink pages: 3.5% pink.

Day 2: Rainbow trains on the 3.5% pool, gets even stingier, and uses 1.5% pink on the next 100 pages. Now the pool is 300 pages with 8.5 pink pages: about 2.8% pink.
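If you want to check the arithmetic yourself, here is a minimal Python sketch of the running tally. The function name `pooled_pink_share` is ours, made up for illustration, and the daily pink rates are simply the ones from the example above, not the output of any real model.

```python
def pooled_pink_share(daily_pages, daily_pink_rates):
    """Return the pink share of the whole training pool after each day."""
    total_pages = 0
    total_pink = 0.0
    shares = []
    for pages, rate in zip(daily_pages, daily_pink_rates):
        total_pages += pages          # every new page joins the pool
        total_pink += pages * rate    # only a fraction of them are pink
        shares.append(total_pink / total_pages)
    return shares


# Day 0, Day 1, Day 2 from the example: 100 pages a day at 5%, 2%, 1.5% pink.
shares = pooled_pink_share([100, 100, 100], [0.05, 0.02, 0.015])
for day, share in enumerate(shares):
    print(f"Day {day}: pool is {share:.1%} pink")
```

Each day's pages are added to the pool Rainbow learns from next, so a small shortfall on one day drags down the share for every day after it.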

Next, let’s say Janie takes Rainbow public. Now there are kids at other schools who do have pink crayons and really like pink hues. When these kids start using Rainbow to color their pages, Rainbow confidently underuses pink and soon, none of the kids using Rainbow are coloring with pink.

This wasn’t because of anything Rainbow or Janie intended but quite simply because there was a resource shortage of pink before Rainbow existed at VC Elementary School. Not only can these pink-loving kids not use their favorite color, but slowly, pink disappears because the initial resource inequality got baked in early and compounded on itself.

And don’t get me started about the downstream implications for Barbie and My Little Pony coloring books in this imaginary situation.

Real World Applications

By now, if you weren’t aware of systemic bias in AI, hopefully it feels a little more quantifiable. But let’s step back out into the real world.

In our coloring book example, no one was harmed; the world just had a little less color. But start to imagine the implications in a few real-world situations that ethical AI practitioners need to grapple with: disproportionate health outcomes, economic disparity, and algorithmic pricing.

Health Outcomes: The NIH did not require the inclusion of women in clinical research until 1993, and there is a huge disparity in the amount of funding for research focused on women’s health. One oft-cited figure is that while heart disease is the leading killer of women, only 4-6% of the NIH’s budget goes to funding research on women’s heart health. This means that as we build scientific research and breakthroughs using AI trained on that record, men will benefit disproportionately. Layer in other underrepresented groups, for instance women of color, and the situation feels even more dire.

Economic Implications: In 2018, Amazon reportedly scrapped a recruitment tool that favored men’s resumes. Cindy Gallop, CEO of “Make Love Not Porn” and the self-proclaimed “Michael Bay of Business,” has repeatedly drawn attention to the economic implications of algorithmic bias, noting how her impressions reach a fraction of those of her male colleagues, which has drastic implications for funding. She stresses that “Algorithmic Suppression = Economic Oppression.”

Algorithmic Pricing: Apps such as Uber or DoorDash can price their services at the individual level using predictions of your willingness to pay. Products marketed to women are already often more expensive, a markup labeled the “Pink Tax.” Now it’s possible that the algorithms will pick up on times of high need that are gender-driven, like late-night headache medicine delivery during our periods. This extends to individuals with disabilities, for whom the need for delivery services is higher.

How To Control For Bias and Fairness

What could be done? It’s not too late for Janie and her friends to reintroduce pink. Janie can test Rainbow’s choices and measure how often it selects each color. When she sees that pink is selected fewer times, she can tune her model’s parameters to give a more even distribution of outcomes.
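Janie’s test can be sketched in a few lines of Python. Everything here is hypothetical: the sample pages are invented, the helper names `color_shares` and `underused` are ours, and the idea that every color “should” get an even share (with a flag at half of that) is just one assumption about what fair looks like. A real audit would pick its own target.

```python
from collections import Counter


def color_shares(pages):
    """Fraction of pages that use each color."""
    counts = Counter(pages)
    return {color: n / len(pages) for color, n in counts.items()}


def underused(shares, palette, tolerance=0.5):
    """Colors whose share is below `tolerance` times an even split.

    The even split is an assumed target, not a universal definition of fair.
    """
    even_share = 1 / len(palette)
    return [c for c in palette if shares.get(c, 0.0) < even_share * tolerance]


# Invented sample of 100 pages colored by Rainbow (illustrative only).
pages = (["blue"] * 40 + ["green"] * 30 + ["red"] * 10
         + ["orange"] * 9 + ["purple"] * 9 + ["pink"] * 2)
palette = ["blue", "green", "red", "orange", "purple", "pink"]

shares = color_shares(pages)
flagged = underused(shares, palette)
print(flagged)
```

Note that the check only catches pink because pink is in the `palette` list to begin with. If nobody thinks to put it there, the audit passes with flying colors, just not that one.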

But in order for Janie to ask her model about pink, she needs to care about pink. And if Janie only ever works with people from VC Elementary School, she’s never going to even see the missing color.

In other words, the people designing AI systems—the engineers, the developers, the data scientists, even the marketers—need to come from diverse backgrounds to flag what could be potential oversights from the inside. It also means we need to use AI, unabashedly call out the disparities, and endlessly write to flag unfairness and misrepresentation as it occurs.

We need to demand a seat at the table today, so that the algorithms don’t inadvertently erase the vibrant, brightly colored world our children deserve to live in tomorrow.